IQT Labs recently audited an open-source deep learning tool called FakeFinder that predicts whether a video is a deepfake.

In a previous post, we wrote about our audit approach, which was to use the Artificial Intelligence Ethics Framework for the Intelligence Community as a guiding framework and examine FakeFinder from four perspectives — Ethics, the User Experience (UX), Bias, and Cybersecurity. In this post we dive into the Ethics portion of our audit.

——

With many different ways that AI can fail, how do we know when an AI tool is “ready” and “safe” for deployment? And what does that even mean? How much “assurance” do we need? How much risk is tolerable? Who has the (moral? legal?) authority to impose that risk? Who can they impose it upon? And who deserves a seat at the negotiating table where all these questions are discussed and debated?

None of these questions have easy answers. And at the start of our audit, I kept thinking about something Michael Lewis wrote in his book “The Undoing Project,” that we are all “deterministic device[s] thrown into a probabilistic universe.” We want assurance just like we crave certainty, but unfortunately, the world doesn’t always cooperate.

Ryan and I figured that complete assurance wasn’t a realistic goal. It isn’t possible to foresee all future uses (and misuses) of a technology, and as a result, we couldn’t expect to identify (let alone prevent) all future harms imposed by a tool. We also believed that the possibility of unidentified future harm wasn’t (necessarily) a reason to reject an AI tool like FakeFinder.

We did, however, take it as a given that before an organization deploys a new tool, that organization has a responsibility to consider the ethical implications of doing so — and to weigh the risks and benefits of using the tool against the risks (and benefits) of not using it. For a technical team like ours, identifying ethical concerns and thinking in terms of risk required a shift in mindset — instead of looking at FakeFinder in terms of its technical performance, we had to consider its potential to impose real-world harms on different stakeholders and constituents. So we asked ourselves, how could we do this in a rigorous, structured way?

First, we narrowed the scope of our assessment to a specific use case for FakeFinder: we imagined an intelligence analyst sifting through found videos, in some scenario where manipulated video content would warrant additional scrutiny. Then, we used an ethical matrix to help us identify areas of concern. The ethical matrix is a structured thought exercise described by Cathy O’Neil and Hanna Gunn in “Near-Term Artificial Intelligence and the Ethical Matrix” (a chapter from S. Matthew Liao’s recently published volume, “Ethics of Artificial Intelligence”). The exercise focuses on three ethical concepts (autonomy, well-being, and justice) and is designed to help people without formal training in ethics (like Ryan and me!) think counterfactually about how an AI tool might impact different stakeholders in different ways.

To do this exercise, you begin by drawing a matrix with three columns, one each for autonomy, well-being, and justice. Then you identify different stakeholders that might be impacted by the AI tool you are considering and place each stakeholder into their own row. Next, you challenge yourself to fill out the entire matrix, thinking through how each stakeholder group could be impacted with regard to each ethical concept.

Our starting point was an empty matrix with six stakeholder groups: the FakeFinder team, the deepfake detection model builders, future users of FakeFinder (who we imagined to be intelligence analysts), people captured in the videos in the training dataset, people appearing in videos uploaded to FakeFinder, and Facebook, the data owner who curated the training dataset and organized the Deepfake Detection Challenge.

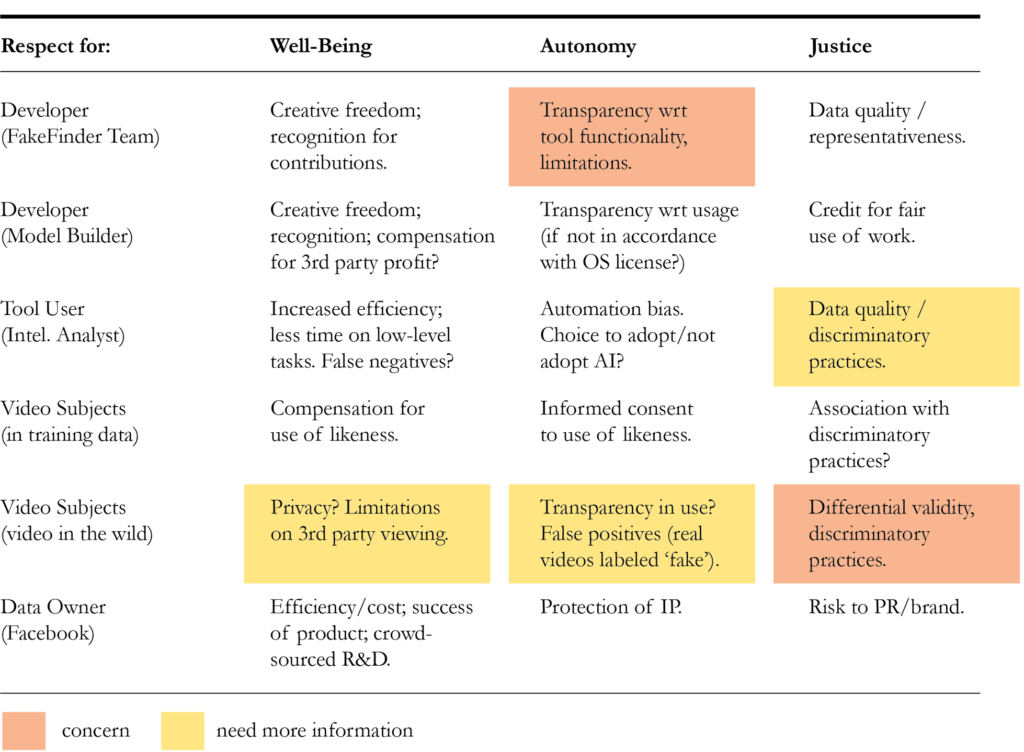
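For readers who prefer to see the structure written down, here is a minimal sketch of the empty matrix as a nested dictionary. It is an illustration only, not something taken from the audit itself: the function names `empty_matrix` and `unfilled_cells`, the shortened stakeholder labels, and the placeholder note are all assumptions made for this example.

```python
# Columns of the ethical matrix: the three concepts named in the exercise.
CONCEPTS = ("autonomy", "well-being", "justice")

# Rows: the six stakeholder groups listed above (labels shortened for this sketch).
STAKEHOLDERS = (
    "FakeFinder team",
    "deepfake detection model builders",
    "future users (intelligence analysts)",
    "people in training-dataset videos",
    "people in uploaded videos",
    "Facebook (training data owner)",
)


def empty_matrix(stakeholders=STAKEHOLDERS, concepts=CONCEPTS):
    """Build one row per stakeholder and one column per concept.

    Each cell starts as an empty list of free-text notes.
    """
    return {s: {c: [] for c in concepts} for s in stakeholders}


def unfilled_cells(matrix):
    """Return the (stakeholder, concept) pairs that still have no notes."""
    return [
        (stakeholder, concept)
        for stakeholder, row in matrix.items()
        for concept, notes in row.items()
        if not notes
    ]


matrix = empty_matrix()

# Hypothetical placeholder text, not a finding from the audit.
matrix["future users (intelligence analysts)"]["autonomy"].append(
    "placeholder: how might the tool affect this group's autonomy?"
)

remaining = unfilled_cells(matrix)
```

Listing the unfilled cells mirrors the point of the exercise, which is to challenge yourself to fill out every cell rather than only the obvious ones.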

To help us fill out the matrix, we interviewed FakeFinder’s development team about their design goals and the risks they considered while developing FakeFinder. We applied colored tags to highlight concerns: yellow indicated issues that needed further consideration, and red highlighted what we saw as primary concerns. Here is our completed matrix:

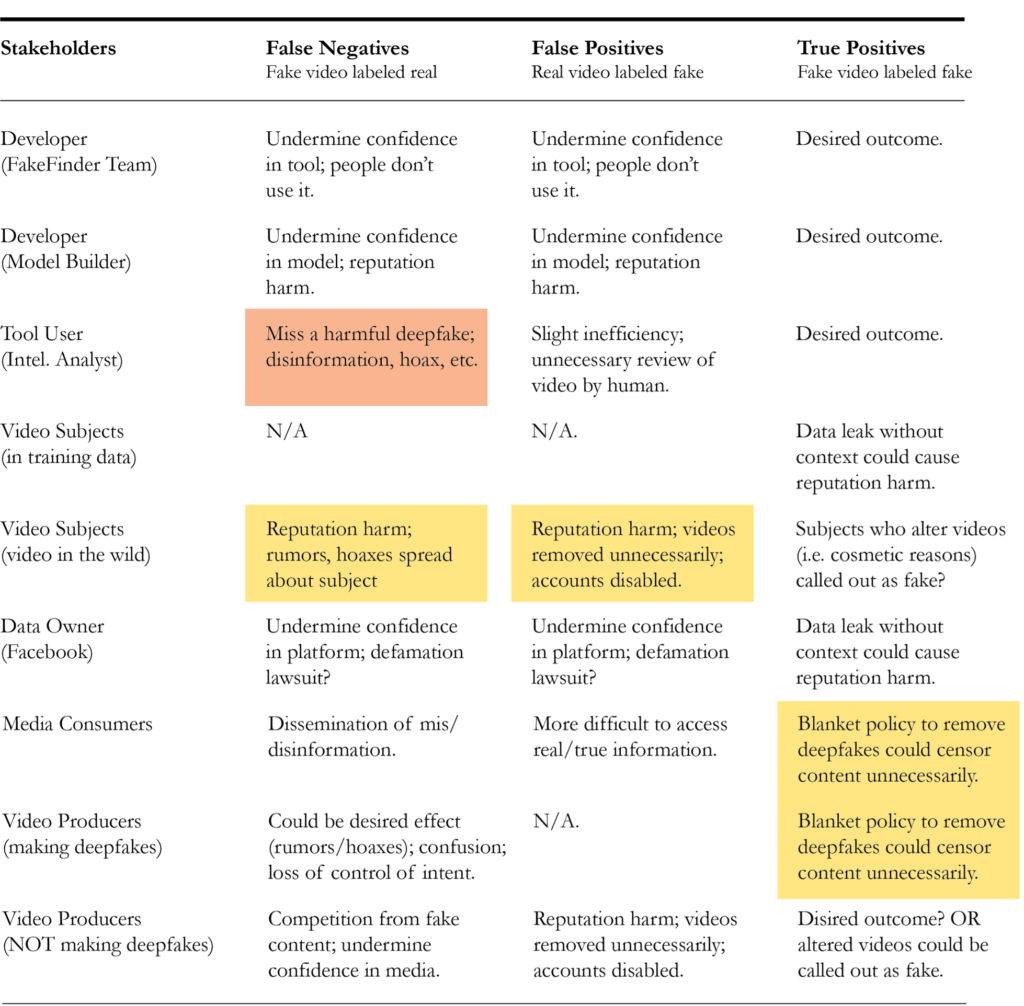

We could have stopped at this point, but we thought there might be additional ethical concerns that we hadn’t considered. So, we shared this initial version of the matrix with several people who had no connection to FakeFinder (legal counsel, a mis/dis-information expert, a specialist in AI policy, and a media ethicist) and asked them what we had overlooked. Based on these discussions, we constructed a second version of the ethical matrix, this time concentrating on false negatives and false positives, and incorporating two additional stakeholder groups that we hadn’t previously considered: media consumers and video producers.

In our estimation, the primary benefit of FakeFinder is increased efficiency in the identification of deepfakes. This would benefit people using the tool as well as the organizations they work for. It is possible to use non-AI methods to identify deepfakes, and in fact, this is how things are done today. However, it is not feasible for human analysts to review all available video content and manually check for deepfakes. Automating this task would enable greater speed and scalability of video review and could also lead to other positive downstream impacts, such as labeling or removing harmful deepfakes from a broader media ecosystem.

While there may be still other ethical concerns we have not considered, we view these ethical matrices as artifacts of the thought process that led us to the conclusions summarized below.

Two primary risks we identified were:

- A lack of transparency surrounding the criteria that FakeFinder uses to identify deepfakes

- The potential for differential outcomes across different groups of video subjects. This could pose a significant concern in terms of justice / fairness if the tool resulted in differential outcomes with respect to protected classes, such as race or gender. In the Bias portion of our audit (which we will discuss in our next post), we looked into this concern in much more detail.

As part of our audit, we also made some suggestions for how to mitigate these risks:

- Modifying FakeFinder’s user interface to make detection criteria clearer to users.

- Remedying the bias before using FakeFinder in a production setting, since the results of our bias testing did indicate some areas for concern.

We also recommend that an organization looking to use FakeFinder develop a policy around how results can and should be used. At a minimum, this policy should recognize the following:

- That it is not possible to fully characterize which types of manipulations FakeFinder will identify as “fake”;

- That video producers might generate deepfakes for a wide variety of reasons, not all of which are nefarious; and

- That FakeFinder’s predictions are probabilistic, not deterministic. As such, specifying thresholds in advance would be helpful (for example, how confident must a prediction be before someone is authorized to act upon that information?).
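To make the last point concrete, here is a minimal sketch of what pre-specified thresholds could look like in code. Everything in it is an assumption for illustration: it supposes the prediction arrives as a score between 0 and 1, and the function name `triage`, the threshold values, and the action labels are hypothetical rather than part of FakeFinder or of any policy we reviewed.

```python
def triage(score, review_threshold=0.5, act_threshold=0.9):
    """Map a deepfake score to a pre-agreed action.

    Assumes `score` is a probability-like value in [0, 1].
    The default thresholds are placeholders; a real policy would set
    them deliberately and before any predictions are acted upon.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if not 0.0 <= review_threshold <= act_threshold <= 1.0:
        raise ValueError("thresholds must satisfy 0 <= review <= act <= 1")

    if score >= act_threshold:
        return "escalate"       # confident enough to act under the policy
    if score >= review_threshold:
        return "manual_review"  # uncertain: a human should take a closer look
    return "no_action"          # below the level the policy treats as a concern


decisions = [triage(s) for s in (0.95, 0.60, 0.10)]
```

Fixing the thresholds before looking at any individual video keeps the decision about acceptable risk in the policy rather than in the moment.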

Stay tuned for the next post of our AI Assurance blog series where we further explore the Bias portion of our audit.