This is the third post in a series of blog posts detailing the development process of the AI Sonobuoy project by IQT Labs. You can find the first one here and the second one here.

In this blog post, we cover the evolution of the hardware used in the project and the corresponding software changes we made at each stage.

High-Level Overview

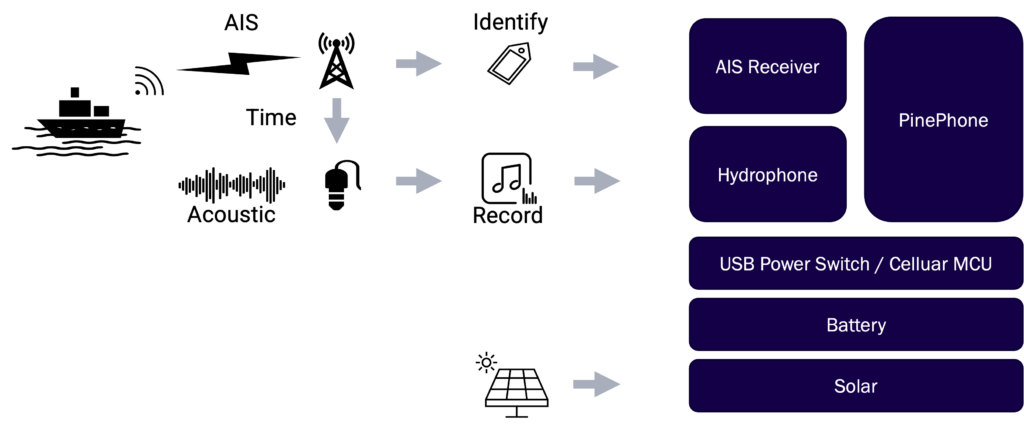

The AI Sonobuoy Collector system creates an auto-labeled underwater audio dataset for machine learning.

It has been developed, tested, and deployed on several compute platforms, each of which has a revised software stack. In this piece, we explore the various iterations of the system.

Through every version, we gained a deeper understanding of system requirements and of the challenges of maritime deployment. Every iteration was developed with a focus on leveraging low-cost, readily available, and easy-to-use components. Furthermore, each successive version incorporated the lessons learned from previous ones.

Collector Hardware

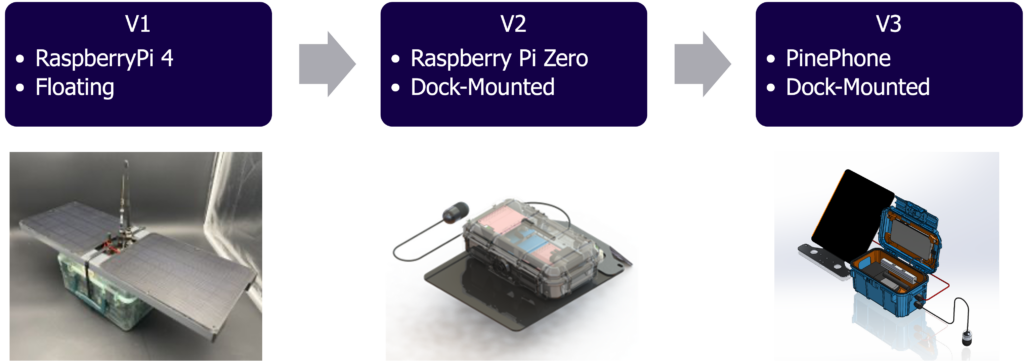

The Collection platform, the self-labeling dataset-creation unit situated at the beginning of the machine learning pipeline, underwent three major iterations during the development of the project.

The first version was organized around a Raspberry Pi single-board computer housed in a large dry box that was intended to be moored in the water. Waterproofing the system with only a dry box proved to be a challenge, especially at the connection points of the external antennas.

We reassessed our approach and concluded that usable datasets could still be derived if we mounted our collection system to a platform that was already floating, such as a dock or buoy.

The second version was designed to be mounted to a dock with the hydrophone hanging in the water. This system, which was also Raspberry Pi-based, used a general-purpose input/output (GPIO) header expansion board, allowing the HATs (add-on peripherals) to fit in a smaller box. This hardware design worked as intended, but it was complex and labor-intensive to build and configure due to the number of custom components required.

Our third and current iteration is still dock-mounted but leverages a PinePhone (Linux cell phone) and a similar dry box housing. Using a cell phone that has battery management and wireless connectivity already built in makes constructing the system much simpler.

In total, the three Collection systems shown above have been deployed for over nine months and have recorded underwater audio and AIS (Automatic Identification System) data the entire time. Although we have not optimized power utilization, our V3 system draws less than 7 W when operating at full power. When combined with a battery and solar panel, the system can run continuously.
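As a rough illustration of the power budget behind a battery-plus-solar setup like this one, the sketch below works through the arithmetic in Python. Only the 7 W upper bound and the 20 W panel rating come from this post. The conversion efficiency and battery capacity are placeholder assumptions, not measured values or datasheet figures, and the function names are invented for this example.

```python
# Back-of-the-envelope power budget for a solar-powered collector.
# From the post: draw is under 7 W at full power; the panel is rated at 20 W.
# ASSUMPTIONS (not from the post): EFFICIENCY and BATTERY_WH are placeholders.

HOURS_PER_DAY = 24.0


def daily_energy_wh(avg_draw_w: float) -> float:
    """Energy consumed in one day at a constant average draw, in watt-hours."""
    return avg_draw_w * HOURS_PER_DAY


def sun_hours_needed(avg_draw_w: float, panel_w: float, efficiency: float) -> float:
    """Hours per day of rated panel output needed to replace one day's consumption."""
    return daily_energy_wh(avg_draw_w) / (panel_w * efficiency)


def battery_runtime_h(capacity_wh: float, avg_draw_w: float) -> float:
    """Hours the system can run from the battery alone, with no solar input."""
    return capacity_wh / avg_draw_w


MAX_DRAW_W = 7.0    # upper bound stated in the post
PANEL_W = 20.0      # panel rating stated in the post
EFFICIENCY = 0.8    # hypothetical charging/conversion efficiency
BATTERY_WH = 50.0   # hypothetical capacity, not the actual battery spec

worst_case_wh = daily_energy_wh(MAX_DRAW_W)
print(f"Worst-case daily consumption: {worst_case_wh:.0f} Wh")
print(f"Rated-output hours to break even: "
      f"{sun_hours_needed(MAX_DRAW_W, PANEL_W, EFFICIENCY):.1f} h/day")
print(f"Battery-only runtime at max draw: "
      f"{battery_runtime_h(BATTERY_WH, MAX_DRAW_W):.1f} h")
```

Because 7 W is an upper bound at full power, the average draw, and therefore the break-even figure, would be lower in practice. The sketch is only meant to show how draw, panel output, and battery capacity relate to one another.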

We noted earlier that the PinePhone Collection system design allows for plug-and-play utilization of readily available USB hardware components. The following list summarizes what we used:

- PinePhone Pro – Linux cellphone

- WEGMATT dAISy 2+ – Dual-channel AIS receiver

- Aquarian H2a Hydrophone – Low-cost commercial hydrophone

- Voltaic v50 Battery – USB battery, always-on, solar charging

- Voltaic Solar Panel – 20W 6V solar panel

Collector Software Evolution

The software powering the AI Sonobuoy was upgraded alongside every physical iteration of the system. Each software revision was meant to increase the system’s availability and maximize the fidelity of the data it recorded. The modifications were also designed to align the AI Sonobuoy platform ever more closely with our goal of building widely available, open-source systems.

Earlier versions of the code took a component-first approach to the software rather than a data-first one. Over time, we moved toward a model focused on maintaining the scalability, consistency, and connectivity of data as it flowed through the system. (We’ll share more details about this in a future post in the series.)

As with other robotics applications, we used a centralized, bus-based architecture built on MQTT, a lightweight publish/subscribe messaging protocol. We first adopted a centralized bus in our Teachable Camera project, and it has also been used in the SkyScan project, allowing for interoperability between systems. Our revisions to the AI Sonobuoy Collector have strengthened our conviction that a centralized bus is an effective and robust method for designing collection systems.
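To make the idea of a centralized bus more concrete, here is a minimal, in-process sketch of publish/subscribe routing with MQTT-style topic filters. It is not the project's code and does not talk to a real MQTT broker: `LocalBus`, `topic_matches`, and the `collector/...` topic names are all hypothetical, chosen only to show how independent modules can exchange data without knowing about each other. The matching is simplified to the two standard wildcards (`+` for a single level, `#` for all remaining levels).

```python
from typing import Callable, List, Tuple

Handler = Callable[[str, dict], None]


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Simplified MQTT-style match: '+' is one level, '#' is all remaining levels."""
    filter_parts = topic_filter.split("/")
    topic_parts = topic.split("/")
    for i, part in enumerate(filter_parts):
        if part == "#":
            # '#' is only valid as the final level of a filter.
            return i == len(filter_parts) - 1
        if i >= len(topic_parts):
            return False
        if part != "+" and part != topic_parts[i]:
            return False
    return len(filter_parts) == len(topic_parts)


class LocalBus:
    """In-process stand-in for a message broker (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._subscriptions: List[Tuple[str, Handler]] = []

    def subscribe(self, topic_filter: str, handler: Handler) -> None:
        self._subscriptions.append((topic_filter, handler))

    def publish(self, topic: str, payload: dict) -> int:
        """Deliver payload to every matching subscriber; return the delivery count."""
        delivered = 0
        for topic_filter, handler in self._subscriptions:
            if topic_matches(topic_filter, topic):
                handler(topic, payload)
                delivered += 1
        return delivered


# Hypothetical wiring: one module logs everything, another only watches AIS topics.
bus = LocalBus()
everything: List[str] = []
ais_only: List[dict] = []
bus.subscribe("collector/#", lambda topic, payload: everything.append(topic))
bus.subscribe("collector/ais/+", lambda topic, payload: ais_only.append(payload))

bus.publish("collector/ais/position", {"vessel_id": "example", "lat": 0.0, "lon": 0.0})
bus.publish("collector/audio/clip", {"path": "example.wav"})
print(everything)
print(ais_only)
```

The point of the pattern is that the publisher of an audio clip and the publisher of an AIS message never reference their consumers directly. Adding or replacing a module only requires agreeing on topic names and payload shapes.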

Throughout the development of these platforms, each functional module that the AI Sonobuoy relies on was also containerized to enable greater cross-platform reusability and to increase the fidelity of the overall system. Given the high level of abstraction in open-source software, containerizing functionality provides the simplest means of creating a reliable system without a single point of failure.

IQT Labs Perspective

The AI Sonobuoy Collector underwent several hardware and software iterations during this project. Each version was incrementally built towards our overall goal of creating a robust acoustic dataset labeling and identification system. Most software-defined sensor platforms can be distilled into a phone with sensor and power peripherals. We concluded that the early iterations of our AI Sonobuoy hardware mimicked the capability of a Linux-based phone, so the obvious next step was to just use one.

Introducing the PinePhone greatly simplified our system design. Its Linux operating system meant that it was compatible with our prior work and behaved similarly to platforms we were already familiar with. It did, however, come at the cost of inheriting the technical debt baked into the phone. As we simplified our hardware, we did the same thing with our software, while increasing its robustness by implementing redundancies, simplifying modules, and standardizing implementations.

Stay on the lookout for an upcoming blog post about “Data-First Software Architecture” to learn more about how we are writing software for the Edge.